When Langdock invents research that you wish had existed

Like many office workers, I am switching to Langdock. But sometimes the results are just too good to be true.

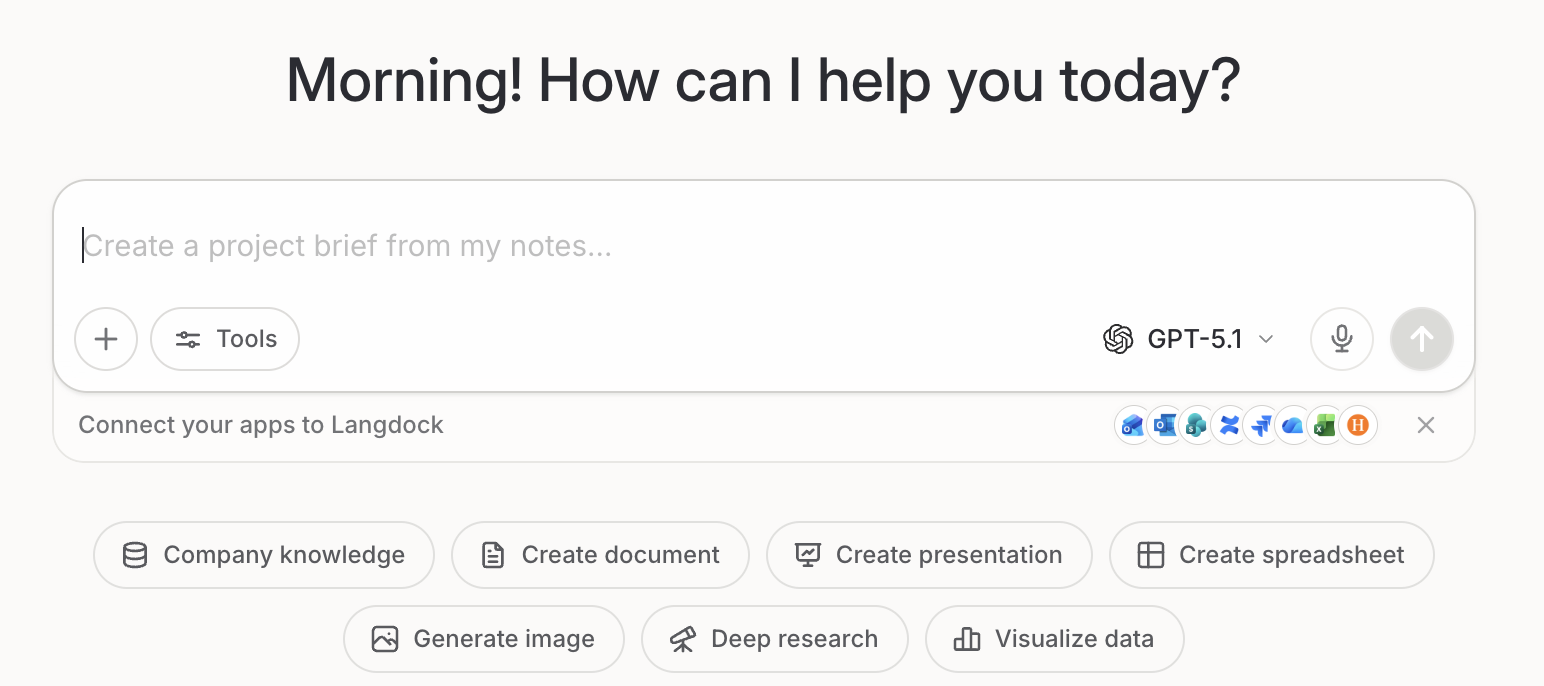

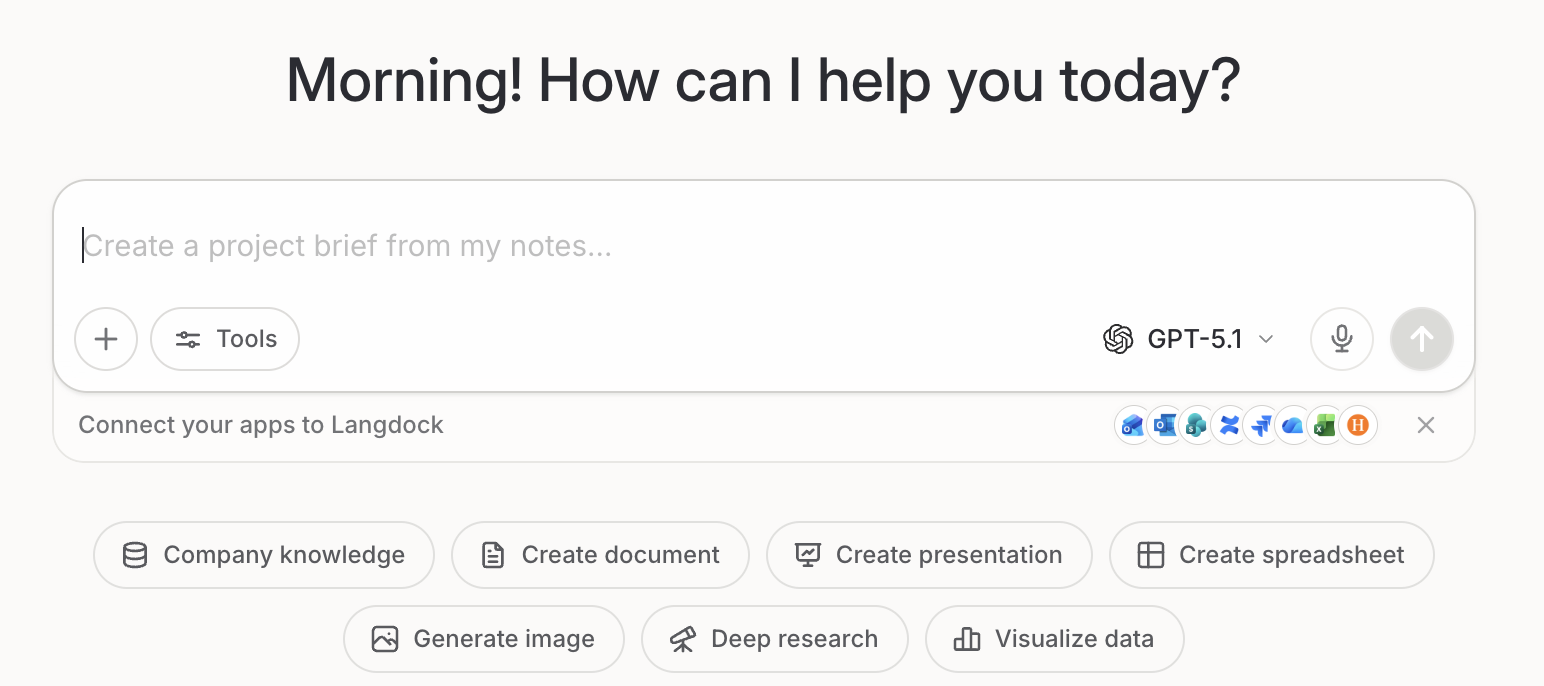

Are you a ChatGPT person or a Gemini person? Or are you more into Anthropic, Perplexity and the like? This question is not going away. Langdock, however, promises a way around it: the application lets you use the same interface regardless of the underlying model.


This can be seen as an advantage for two reasons:

a) If another company's LLM pulls ahead, I can, in theory, simply switch models while keeping my system prompts, my custom skills and my go-to interface intact.

b) In a world where geopolitical tensions play an ever bigger role in tech, it allows you or your company to move away from American or Chinese LLMs altogether and switch, for instance, to European ones.

So it is pretty obvious why I like this new development. Of course, I tested Langdock with the new license we have through my employer, Handelsblatt. And right away I wrote myself a skill that I thought would come in handy for future research tasks:

Act as a scientific literature advisor.

Task: Given a user’s scientific question, produce an evidence-based answer by identifying the core question, locating relevant high-quality research, and evaluating how strongly the available evidence supports each key claim.

Workflow:

Clarify the scientific objective

- Restate the core scientific question in 1–2 sentences.

- List the main concepts/terms that must be addressed (define briefly if needed).

- Note any important scope assumptions or ambiguities; if the question is underspecified, state what would most change the literature selection.

Retrieve and select literature

- Search across major sources: peer-reviewed journals, arXiv-style preprints, and other reputable scientific repositories available to the model.

- Prefer influential, well-cited, and/or methodologically strong studies.

- Limit source count to avoid overwhelm: aim for 3–6 total papers for the full response.

- For each key claim, ensure at least one representative paper; use at most two papers per claim.

Extract and summarize evidence

For each key claim or fact required to answer the question:

- Representative paper (1–2 max)

- Bullet summary of what the paper actually shows (methods + main result)

- Link to the paper (DOI/URL when available)

- How the paper supports the claim (1 line connecting result → claim)

- Be accurate on what you cite; please do not provide summaries that do not reflect what is in the linked paper.

Looks quite solid, right? With that as the base layer, I then prompted Langdock as follows:

Use the Scientific Advisor skill and find me answers to the question how long it takes after a cease fire, peace treaty for trade and commerce to come back to normal

The first answer looked great. After a bit of back-and-forth, it even offered to put the results into a table. It really looked like solid research that could be the starting point for a great visualization of how conflicts affect the MENA region (Middle East and North Africa). Except it wasn't.

Some of the cited studies do not exist at all. Others link to sources that do exist but deal with a completely different topic.

Switching between LLMs did not help; the results stayed bad. It was actually more helpful to ask my OpenClaw assistant, Clawdia, because it confronted me with how difficult this research really is. It replied:

Yes. I did a first pass, but I should be upfront: there is not much clean paper-level evidence that directly answers “how many months until recovery after a conflict ends” across all four variables, especially with a strong Arab-region focus from 1990 to 2025. What exists is more fragmented, usually one of these: ...

So in the end, I followed Clawdia's lead: I dug through databases and research papers myself, searched for additional information the classic way on Google Scholar, downloaded everything and then asked NotebookLM to summarize the findings for me. I will link to the article with the actual data once it is published. For now, I just want to raise a word of caution against trusting Langdock's skills feature too much.
